George Bo-Linn

Pascale Carayon

Peter Pronovost

William Rouse

Proctor Reid

Robert Saunders

Urgent Change is Needed to Improve Health Outcomes at Lower Cost

Although the American health system has islands of excellence, it currently performs below its potential in several dimensions, with uneven patient safety, escalating costs and stagnant productivity, and inconsistent use of scientific evidence (IOM, 2001, 2012; Kocher and Sahni, 2011a). Even as overall health care expenditures have continued to grow, several studies suggest that up to 30 percent of those expenditures are unnecessary or wasted (Farrell et al., 2008; IOM, 2010, 2012; Martin et al., 2012; Wennberg et al., 2002). Furthermore, evidence is inconsistently applied to clinical care, with patients receiving evidence-based care recommended by guidelines only half of the time (McGlynn et al., 2003).

One particular concern is the incidence of patient harm, which remains far too common. Research in the past decade estimates that as many as 20 to 30 percent of hospitalized patients experience harm during their stay (Classen et al., 2011; Landrigan et al., 2010; Levinson, 2010, 2012). Patients can experience multiple harms in each health care interaction, especially in critical or complex environments and across care settings. Beyond traditional measures of harm, patients are often at risk for another type of harm: the loss of dignity and respect. In one survey, almost half of all patients reported concerns about the patient-centeredness of their health care encounters, such as being listened to, having information explained clearly, being shown respect, and receiving sufficient time for their health concerns (Schoen et al., 2006).

These quality and safety shortfalls occur even as clinicians expend considerable time and effort caring for their patients. The problem is not with the individuals working in the health care enterprise, but with the design and operation of the multiple systems in health care. As currently designed, these systems depend on the heroism of clinicians to ensure patient safety and promote care quality. At the same time, they add unnecessary burdens to clinical workflows, silo care activities, and divert focus from patient needs and goals.

Efforts to address these concerns are hampered by several implementation challenges, including a limited capacity for measurement. For example, improving safety has been impeded by the lack of agreement on a definition of patient harm. Multiple definitions are currently in use, which has led to divergent estimates of the proportion of care delivered safely (Classen et al., 2011; Pham et al., 2013). The lack of standardized definitions and metrics has also challenged institutions seeking to improve their care processes and compare their performance with that of others.

Implementation efforts are further challenged by the complexity of modern clinical care, which strains individual human capacity (IOM, 2012). To illustrate the extent of this complexity, an average intensive care unit (ICU) patient requires 200 clinical interventions every day, more than any individual care provider can manage (Donchin et al., 1995). Furthermore, this same provider may have to monitor and react to up to 240 vital sign inputs for these critical care patients (Donchin and Seagull, 2002). Complexity is not limited to hospital environments. A 2008 study of a large multispecialty practice in Massachusetts found that the average primary care physician managed 370 unique primary diagnoses, each associated with a set of evidence-based practices; 600 unique medications; and approximately 150 unique laboratory tests (Semel et al., 2010). The complexity of health care extends beyond these specific examples to permeate all aspects of clinical care, and it highlights the need for systems approaches to delivering care.

New technologies, such as electronic health records, introduce great potential for better managing complexity. But in order to make gains in quality, safety, or cost, technological interventions require thoughtful execution, implementation, and coordination. Applied without forethought, new technologies may even exacerbate existing inefficient care processes or create new problems. A new technology could add unnecessary steps to clinical workflows, thereby lowering efficiency, or be poorly designed, thus becoming a source of errors and potential safety challenges. For example, even though health care has increased its investment in health information technology (IT) in recent years, the expected gains in productivity and patient outcomes have not been seen (Kellermann and Jones, 2013; Kocher and Sahni, 2011b). The potential gains are great—health care’s cost challenge could be substantially reduced if health care achieved just half the productivity gains from IT as the telecommunications industry (Hillestad et al., 2005; Kellermann and Jones, 2013). There are multiple reasons that these gains have not occurred, such as the lack of interoperability, yet a major reason is that care processes have not been redesigned to take advantage of the efficiencies offered by health IT.

As illustrated above, the solution to the quality, safety, and value challenges in the health care system is to understand and address the underlying broken processes, and to take a systems approach in doing so (Hoffman and Emanuel, 2013). Moreover, given the complexity of modern clinical care, initiatives that simply add to a clinician’s current workload are unlikely to succeed. Rather, significant and sustainable improvement requires reconfiguring the environment, systems, and processes in which health care professionals practice (Carayon et al., 2006; IOM, 2012). In this way, a systems approach can reduce the burden of work that clinicians face while improving safety, quality, and value.

This paper examines systems solutions to health care delivery with case studies of successful systems-based interventions in health care and other sectors of the economy. The paper limits itself to care delivery because of the multiple opportunities to improve care, the greater traction for solutions from a limited scope, and the availability of results demonstrating the impact of systems approaches. Although this paper specifically examines health care, systems approaches are equally applicable throughout the broader health system to produce better health at lower cost.

A Systems Approach has Improved Quality and Value in Other Industries

Many other sectors of the economy have utilized systematic, evidence-based engineering approaches to achieve striking results in quality, efficiency, safety, and other aspects of operations. These methods are diverse, including strategies drawn from systems engineering, industrial engineering, human factors engineering, and operations research. They leverage multiple types of tools, including statistical process controls, supply chain management, usability evaluation, and modeling and simulation. By using systems approaches, industries have been able to coordinate operations across multiple sites, coordinate the delivery and management of supplies, design usable and useful technologies, and provide consistent and reliable processes (Agwunobi and London, 2009; IOM and NAE, 2011; IOM and NAE, 2005).

Perhaps the most visible and transformative application of systems engineering for improved performance is found in aviation. Aviation has made substantial strides in improving safety with a systems approach. Its first strides in safety resulted from improving the mechanical components of the planes and ensuring that all technologies were supported by redundancies. However, even with these mechanical improvements, aviation accidents still occurred. To eliminate these residual safety problems, the industry had to address human factors. This meant building systems that corrected or mitigated the inevitable human error. The tools for accomplishing this included checklists to promote reliability and provide shared mental models, crew resource management to encourage communications and support a team approach, and general human factors engineering tools to improve the ease of use for cockpit controls and information displays (Nance, 2011; Wiegmann and Shappell, 2001). Under this approach, airline safety statistics have improved dramatically. The number of fatalities that have occurred for domestic commercial airlines has fallen from 2.1 per 100,000 aircraft departures in 1980 to none in the period from 2007 to 2010 (Bureau of Transportation Statistics, 2011).

Aviation is of course not alone in applying systems methods. Another notable approach, adopted by multiple industries, is to apply management systems to operations. These management methods, such as Six Sigma, lean, production system methods, Total Quality Management, and others, provide a systematic approach to addressing problems and continuously improving operations. By using such methods, industries have been able to improve both the quality and value of their operations (Chassin and Loeb, 2011; Hammer, 2004; Kaplan and Patterson, 2008; Kenney, 2008).

Another example can be found in automobile manufacturing, such as with the Toyota production system. The Toyota production system breaks complex processes down into discrete steps with clearly defined order and responsibilities (Bohmer, 2010; Kenney, 2011). Routine communication is also standardized, such that expectations and timelines are consistent and unambiguous. Moreover, the system defines processes that link all the tasks and communications together for a given product or service to further reduce ambiguities. When conflicts or ambiguities do arise, designated teachers assist workers in learning to identify and correct inefficiencies, encouraging a culture of continuous learning within a highly structured and transparent process (IOM, 2012; Spear and Bowen, 1999).

Naval nuclear aircraft carriers provide another example of systems approaches that produce high reliability, as safety on the carrier flight deck is challenged by a hazardous, fast-paced, and extremely complex environment (Rochlin et al., 1987). In order to produce safe operations, multiple types of workers (air traffic controllers, dispatchers, ground crews, and others) must seamlessly work together, make decisions in real time based on information provided by other teams, and continually monitor each other’s work for potential safety problems (Baker et al., 2006; Roberts and Rousseau, 1989). This must all be accomplished in short time frames, with planes launching or landing every 30–60 seconds (Roberts and Rousseau, 1989; U.S. Navy, 2013), and with any failure having catastrophic consequences. In order to manage under such conditions and achieve low rates of failure, aircraft carriers and other high-reliability industries have had to adopt certain practices. Their operations exhibit collective mindfulness, in which everyone understands the importance of safety and continually searches for any changes that could challenge safety; they use robust process improvement tools to eliminate any potential deficiencies; and their leadership and culture encourage trust, communication, and continuous improvement (Chassin and Loeb, 2011).

A Systems Approach Could be Similarly Transformative for Health Care

Although other industries have often achieved striking successes from systems approaches, health care overall has been slow to adopt such approaches and techniques. Systems approaches are applicable to a variety of issues facing the health and health care system, including improving patient safety; preventing disease with a community-based approach; enhancing coordination and communication between care team members; managing the growing complexity of biomedical evidence and diagnostic and treatment options; and continually improving the quality, value, and outcomes of care.

Drawing from experiences with systems approaches in other industries, a systems approach to health is one that applies scientific insights to understand the elements that influence health outcomes; models the relationships between those elements; and alters design, processes, or policies based on the resultant knowledge in order to produce better health at lower cost.

In effect, four general stages are represented in the approach:

- Identification: Identify the multiple elements involved in caring for patients and promoting the health of individuals and populations.

- Description: Describe how those elements operate independently and interdependently.

- Alteration: Change the design of organizations, processes, or policies to enhance the results of the interplay and engage in a continuous improvement process that promotes learning at all levels.

- Implementation: Operationalize the integration of the new dynamics to facilitate the ways people, processes, facilities, equipment, and organizations all work together to achieve better care at lower cost.


Two issues are particularly salient in this respect: 1) because health care alone does not necessarily translate to improvement in health, there is a need to integrate all the systems and subsystems that influence health; and 2) separately optimizing each component does not optimize the overall system results. For example, optimizing health IT systems for one group of tasks is not as effective as optimizing the technology to support high-quality, high-value care processes across the board (Walker and Carayon, 2009).
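The second point can be made concrete with a toy model. The sketch below is purely illustrative and is not drawn from the cited work: it assumes a hypothetical two-stage care process (intake followed by treatment), invented per-staff capacities, and a fixed pool of seven staff. The function name `system_throughput` and all numbers are assumptions chosen only to show that maximizing one stage can starve the other.

```python
def system_throughput(staff_intake: int, staff_treatment: int) -> int:
    """Patients per hour through two sequential stages.

    The slower stage limits the whole process (a simple bottleneck model).
    """
    intake_capacity = 4 * staff_intake        # hypothetical: 4 patients/hour per staff member
    treatment_capacity = 3 * staff_treatment  # hypothetical: 3 patients/hour per staff member
    return min(intake_capacity, treatment_capacity)


TOTAL_STAFF = 7  # hypothetical fixed staffing pool

# Component-level optimization: intake maximizes its own capacity,
# leaving a single staff member for treatment.
intake_first = system_throughput(6, 1)

# System-level optimization: try every split and keep the one with
# the best overall throughput.
best_split = max(
    ((a, TOTAL_STAFF - a) for a in range(1, TOTAL_STAFF)),
    key=lambda split: system_throughput(*split),
)
best = system_throughput(*best_split)

print(f"Intake-optimized split (6, 1): {intake_first} patients/hour")
print(f"System-optimized split {best_split}: {best} patients/hour")
```

Under these assumed numbers, the intake-optimized split moves 3 patients per hour, while the balanced split of 3 intake and 4 treatment staff moves 12. Real care processes are far less tidy, but the bottleneck logic is the same.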

Another important consideration is the decentralized nature of American health care with many independent, yet interconnected, stakeholders. This means that the overall system cannot be understood by only examining individual stakeholders, as new properties emerge from the stakeholders adapting and interacting with each other. There are various conceptual frameworks that describe this unique behavior, including complex adaptive systems, systems of systems, and ultra-large-scale systems, that can help describe how the system behaves (IOM, 2001, 2011; Sage and Cuppan, 2001). These theoretical frameworks are useful in highlighting potential unintended consequences to new policies or describing the resilience or capabilities of the entire system.

During the past several years, the Institute of Medicine (IOM) and the National Academy of Engineering (NAE) have sponsored a number of activities aimed at better understanding and mapping the ways systems engineering principles might accelerate progress toward a more efficient and effective health system. Three prominent examples include:

- Building a Better Delivery System: A New Engineering/Health Care Partnership. This study focused on engineering tools and technologies that could help the health system improve along the six domains of quality outlined by Crossing the Quality Chasm: A New Health System for the 21st Century. It found that the health system had not taken advantage of potentially transformative engineering strategies and technologies, even though those tools had revolutionized quality, productivity, and overall performance in other sectors (IOM and NAE, 2005).

- Systems Engineering to Improve Traumatic Brain Injury Care in the Military Health System. This workshop identified opportunities to apply engineering tools for improving care for patients with traumatic brain injury. These engineering tools can be applied to model, analyze, design, and structure care in all settings, including the battlefield, military health facilities, Veterans Health Administration facilities, and private providers (IOM and NAE, 2009).

- Engineering a Learning Healthcare System: A Look at the Future. This workshop explored engineering principles that are foundational to building a learning health system that continuously learns and improves in terms of its effectiveness, efficiency, safety, and value. It considered how lessons from engineering could help to redesign all aspects of the system, from generating new knowledge to continuously reducing waste to providing safeguards that ensure consistently safe care (IOM and NAE, 2011).

Engineering a Learning Healthcare System

Common Understandings

- The system’s processes must be centered on the right target—the patient.

- System excellence is created by the reliable delivery of established best practices.

- Complexity compels reasoned allowance for tailored adjustments.

- Learning is a nonlinear process.

- Emphasize interdependence and tend to the process interfaces.

- Teamwork and cross-checks trump command and control.

- Performance, transparency, and feedback serve as the engine for improvement.

- Expect errors in the performance of individuals but perfection in the performance of systems.

- Align rewards on key elements of continuous improvement.

- Education and research can facilitate understanding and partnerships between engineering and the health professions.

- Foster a leadership culture, language, and style that reinforce teamwork and results.

SOURCE: IOM and NAE, 2011.

In the last of these publications, from a co-sponsored IOM/NAE workshop, a number of common understandings emerged from participants’ assessment of the examples then available of applying systems principles to health. An abbreviated synopsis follows (IOM and NAE, 2011).

- The system’s processes must be centered on the right target—the patient. Health care is by nature highly complex, involving multiple participants and parallel activities that sometimes take on a character of their own, independent of patient needs or desires. Here, patient needs and perspectives must be at the center of all process design, technology application, and clinician engagement.

- System excellence is created by the reliable delivery of established best practices. Identifying and embedding practices that work best, and developing the system processes to ensure their delivery every time, help to define excellence in system performance and to focus the system on delivering the best possible care for patients.

- Complexity compels reasoned allowance for tailored adjustments. Established routines may need circumstance-specific adjustments for differences in the appropriateness for various individuals, variations in caregiver skill, and the evolving nature of the science base—or all three. Mass customization and other engineering practices can help ensure a consistency that can in fact accelerate the recognition of the need for tailoring.

- Learning is a nonlinear process. The focus on an established hierarchy of scientific evidence as a basis for decision making cannot fully accommodate the fact that much of the sound learning in complex systems occurs in local and individual settings. There is a need to bridge the gap between dependence on formal trials, such as randomized clinical trials, and the experience of local improvement in order to speed learning and avoid impractical costs.

- Emphasize interdependence and tend to the process interfaces. A system is most vulnerable at the interfaces between and among critical processes. In health care, attention to the nature of relationships and handoffs between elements of patient care and connected processes, such as administrative processes, is vital.

- Teamwork and cross-checks trump command and control. In systems designed to guarantee safety, system performance that is effective and efficient requires careful coordination and teamwork as well as a culture that encourages parity among all those with established responsibilities.

- Performance, transparency, and feedback serve as the engine for improvement. Continuous learning and improvement in patient care requires transparency in processes and outcomes as well as the ability to capture feedback and make adjustments.

- Expect errors in the performance of individuals, but perfection in the performance of systems. Human error is inevitable in any system and should be expected. On the other hand, resilient work systems, safeguards, and designed redundancies can deliver perfection in system performance, by mapping processes and embedding prompts, cross-checks, and information loops.

- Align rewards on the key elements of continuous improvement. Incentives, standards, and measurement requirements serve as powerful change agents. It is vital that incentives be carefully considered and directed to the targets most important to improving efficiency and effectiveness and to addressing the systemic shortfalls and challenges in health care today. Incentives should reflect the changes needed and the ways systems engineering may help foster the health and safety of the system and, ultimately, improve patient outcomes.

- Education and research can facilitate understanding and partnerships between engineering and the health professions. Common vocabularies, concepts, and ongoing joint education and research activities that help generate stronger questions and solutions will encourage greater collaborative work between them.

- Foster a leadership culture, language, and style that reinforce teamwork and results. A positive leadership culture fosters and celebrates consensus goals, teamwork, multidisciplinary efforts, transparency, and continuous monitoring and improvement—all of which require supportive and integrated leadership.

Systems Approaches to Health: Examples

The experiences of several organizations that have achieved impressive outcomes by applying systems approaches illustrate the potential applications of systems tools to

- design health care operations to assure consistently high performance, such as using safeguards and redundancies, standard and resilient work processes, and elements that account for human factors;

- develop frameworks for understanding health care structures, processes, and outcomes, along with their relationships (Carayon et al., 2006);

- adopt measurement, feedback, and control tools for continuous improvement, adaptation and tailoring, and managing complex processes;

- apply modeling and simulation tools that highlight interconnections, potential failure points, and possible implications from different policy options; and

- discover new knowledge using data mining, predictive modeling, and other methods.

This section highlights six examples in which systems principles have been applied to significantly advance performance, including safety, quality, and value.

Examples of Systems Approaches to Health

Multiple systems approaches have the potential to improve health and health care, including:

- Human factors engineering

- Industrial and systems engineering

- Production system methods

- Modeling and simulation

- Predictive analytics

- Supply chain management

- Operations management and queuing theory

Case Study: Johns Hopkins University

The Armstrong Institute of Johns Hopkins University, in conjunction with the Gordon and Betty Moore Foundation, has launched a 2-year initiative seeking to improve care in the ICU. This initiative builds on prior work from this team, which has found that systems approaches can improve safety and patient outcomes in critical care environments. For example, one initiative spearheaded by the group found that checklists could reduce the incidence of catheter-related bloodstream infections, a potentially harmful complication occurring in many hospitals. These studies found that the checklist, when implemented across ICUs throughout an entire state, eliminated catheter-related bloodstream infections in the ICUs of most hospitals and resulted in an 80 percent decrease in infections per catheter-day (Pronovost et al., 2006a; Pronovost et al., 2009). If routinely used nationally, this type of tool could help to eliminate these infections, which claim almost 30,000 lives per year and cause approximately $2 billion in health care costs (Pronovost et al., 2006a).

The current initiative, called the Patient Care Program Acute Care Initiative, aims to enhance care quality and reduce patient harms in the intensive care unit. In critical care environments, multiple problems are common, such as deep venous thrombosis, ventilator-associated injuries, and central line-associated bloodstream infections. To prevent these common harms one at a time, a clinician would have to perform multiple preventive interventions for each potential harm, most of which need to be performed several times a day. Adding the number of preventive interventions together, it is estimated that a clinician would need to provide nearly 200 interventions every day under this type of ad hoc approach.

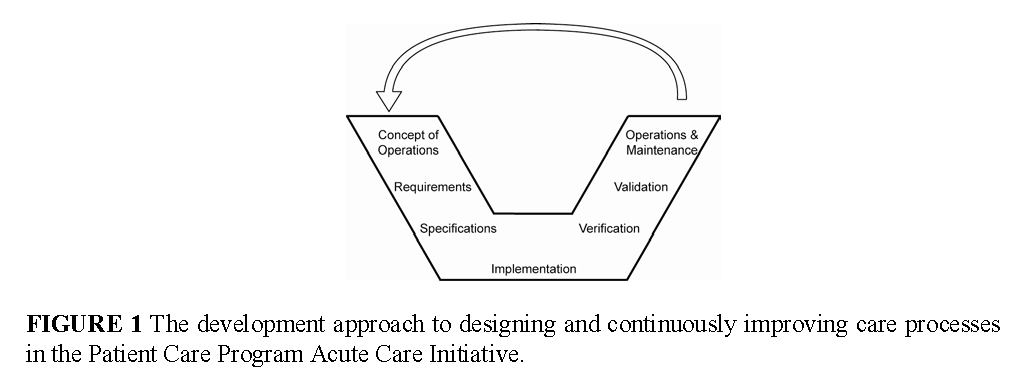

In contrast to the typical approach of tackling each harm one at a time, the project seeks to take a systems perspective to eliminate all types of patient harm, including the harm of a loss of dignity and respect, and to integrate preventive interventions directly into the clinical workflow. It intends to do so by reengineering the ICU using an interdisciplinary, patient-centered approach that integrates clinical information systems and clinical equipment, reengineers the care team workflows, and incorporates patient and family goals into routine care. A schematic of the systems engineering approach being used to design and continuously improve the clinical environment is illustrated in Figure 1.

One critical part of the project is developing technology platforms that coordinate and integrate various technologies and clinical processes. To improve reliability and consistency, one platform will include a dashboard that displays the status of the interventions necessary to prevent harms (those that are due and those that have already been accomplished) and makes that status visible to clinicians, patients, and families. To improve safety and productivity, another platform will integrate various medical technologies and electronic health data. The first phase of this platform will convey orders from the electronic health record to the infusion pump to set the dose for patients in critical care units. The initial platform will save considerable nursing time and effort, as the current process for adjusting an infusion dose requires one nurse to manually enter the new dose level into the infusion pump based on the order in the electronic health record, and a second nurse to manually verify the accuracy of the entry. Moreover, the platform will provide greater reliability and accuracy by eliminating the potential for human error. This application will be implemented in the surgical ICU at Johns Hopkins in the summer of 2013 and at the University of California, San Francisco, in the summer of 2014.

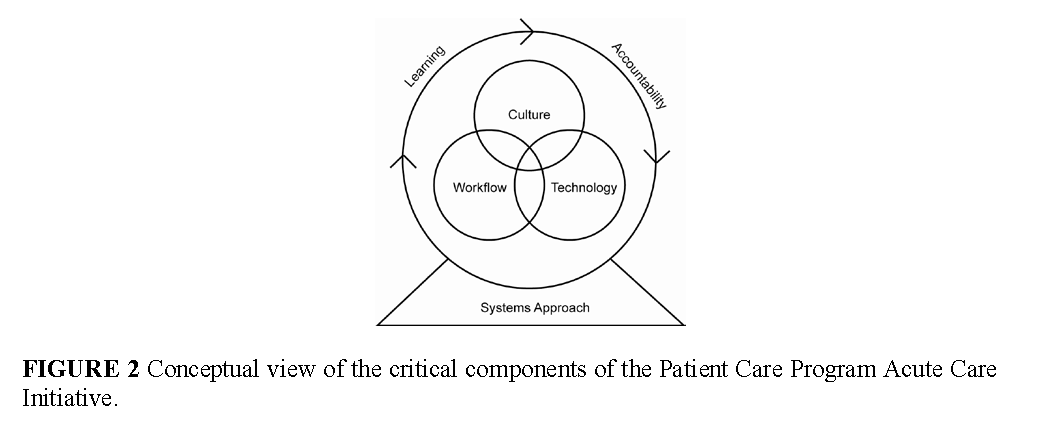

As technology by itself does not lead to sustainable change, this effort is coupled with culture change and teamwork interventions (Pronovost et al., 2005; Timmel et al., 2010). The project will therefore combine interventions in culture, technology, and workflow so that new capabilities can emerge. The importance of combining these elements is represented in the conceptual framework for the project, as illustrated in Figure 2.

Case Study: Virginia Mason Health System

Research has found that management practices adopted from manufacturing sectors can improve the operations of health care organizations, resulting in better patient health outcomes. One recent study found that management practices were associated with improved care process measures and lower mortality for heart attack patients (McConnell et al., 2013). However, this same study pointed out that there was substantial diversity in the types of management practices used, and only one-fifth of institutions were fully implementing them according to best practices. This underscores both the potential for management practices and the challenges preventing these techniques from being consistently used in routine practice.

One institution that has implemented a management system across its entire operations is the Virginia Mason Health System. Virginia Mason uses the Virginia Mason Production System, which is inspired by the Toyota Production System. Although Virginia Mason has used production system methods in all departments, the case of its spine center is instructive for its results and for the challenges it faced. The spine center was encouraged to restructure its processes due to concerns about long wait times and high costs. The center began by mapping out the clinical pathways for its patients and discovered that care was inconsistent—some patients received advanced imaging, like magnetic resonance imaging (MRI) tests, and specialist care, while other similar patients directly received physical therapy. To improve, the clinic reviewed the literature on back pain treatments and developed a standard evidence-based process. Under this new process, patients with non-complicated back pain were directed immediately to physical therapy, and MRI scans and intensive evaluation were reserved for more complex cases. This new process aligns with clinical evidence showing that imaging for lower back pain is often overused, especially in clinical situations where it is unlikely to improve outcomes (Good Stewardship Working Group, 2011). Moreover, Virginia Mason found that this new way of delivering care reduced wait times, improved outcomes, and reduced costs (Blackmore et al., 2011; Fuhrmans, 2007).

Virginia Mason’s experience also reveals the challenges in adopting systems approaches. Implementing the evidence-based system across the organization has required substantial leadership support, backing from the governing board, and transforming the organization’s culture to sustain this work. Furthermore, the current payment system for health care can be an impediment. In the spine center example, the institution began to lose money after adopting the new clinical approach. This was because the institution was paid for high-cost imaging studies, which it was conducting less frequently, but was not paid for inexpensive follow-up care such as telephone consultations, which it was conducting more often. To sustain the improvement initiative, it had to negotiate with local insurers and employers to establish a new payment system for back pain (Blackmore et al., 2011; Fuhrmans, 2007). This experience highlights the multiple factors that can limit spreading systems approaches broadly.

Case Study: Vanderbilt University

Ventilator-associated pneumonia is a serious complication for patients in critical care environments, leading to death in 20 to 50 percent of patients affected (Pham et al., 2013). Preventing ventilator-associated pneumonia requires not just implementing specific preventive measures, but also consistently performing an entire bundle of interventions. When Vanderbilt University Medical Center examined how often it performed each of these preventive measures in its critical care units, it discovered that each individual intervention was conducted frequently, but performance was poor when implementing the entire bundle for every patient.
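The gap between per-measure and whole-bundle performance follows from simple arithmetic. The sketch below is illustrative only: it assumes a hypothetical five-measure bundle, hypothetical compliance rates of 90 percent for each measure, and statistical independence between measures, which real care processes may not satisfy. None of these figures come from Vanderbilt's data.

```python
from math import prod


def bundle_compliance(per_measure_rates):
    """Probability that every measure in a bundle is performed for a patient.

    Assumes each measure is performed independently of the others,
    which is a simplifying assumption made for illustration.
    """
    return prod(per_measure_rates)


# Hypothetical rates for a five-measure bundle; not taken from the source.
rates = [0.90, 0.90, 0.90, 0.90, 0.90]
all_or_none = bundle_compliance(rates)

print(f"Lowest single-measure rate: {min(rates):.0%}")
print(f"All-or-none bundle rate:    {all_or_none:.0%}")
```

Under these assumptions, a unit that performs each measure 90 percent of the time delivers the complete bundle to only about 59 percent of patients, which is why strong individual measures can coexist with poor bundle performance.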

To improve the consistent delivery of the prevention bundle and reduce the rates of ventilator-associated pneumonia, Vanderbilt adopted systems engineering tools and implemented a feedback and control system. Specifically, the organization developed visual dashboards that showed every care team member the status of ventilator preventive measures for each patient: which measures had been done, which needed to be done, and which were overdue. The display was coupled with management reports, provided online and in real time, that identified improvement opportunities across all patients by time and location (McConnell et al., 2013). As a result of these initiatives, average compliance with the ventilator bundle increased from 40 to 90 percent in 1 year, and rates of ventilator-associated pneumonia dropped by over one-third during the same time period (IOM, 2012). The example highlights the potential of systems approaches to increase reliability and consistency in care, thereby improving safety and reducing errors.
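The status logic behind such a dashboard can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of Vanderbilt's system: the `Measure` class, the `status` function, the one-hour "due" window, the measure names, and the repeat intervals are all hypothetical placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Measure:
    name: str
    interval: timedelta            # how often the measure should be repeated
    last_done: Optional[datetime]  # None if never performed


def status(measure: Measure, now: datetime,
           due_window: timedelta = timedelta(hours=1)) -> str:
    """Classify a preventive measure as 'done', 'due', or 'overdue'."""
    if measure.last_done is None:
        return "overdue"
    next_due = measure.last_done + measure.interval
    if now >= next_due:
        return "overdue"
    if now >= next_due - due_window:
        return "due"  # coming up within the assumed one-hour window
    return "done"


# Hypothetical measures and timestamps for one patient.
now = datetime(2013, 1, 2, 12, 0)
measures = [
    Measure("head-of-bed check", timedelta(hours=4), datetime(2013, 1, 2, 11, 0)),
    Measure("oral care", timedelta(hours=4), datetime(2013, 1, 2, 8, 30)),
    Measure("sedation interruption", timedelta(hours=24), datetime(2013, 1, 1, 9, 0)),
]

statuses = {m.name: status(m, now) for m in measures}
for name, state in statuses.items():
    print(f"{name:24s} {state}")
```

A real implementation would draw timestamps from the clinical record and would need clinically validated intervals; the point here is only that a small, explicit rule can turn raw timestamps into the done, due, and overdue states that the care team sees.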

Case Study: Veterans Health Administration (VHA)

In the early 1990s, health care provided by the Veterans Administration (VA) was widely criticized for uneven quality, fragmentation and limited access, poor customer service, and high cost. Based on these concerns, the VA implemented a system-wide reengineering effort to transform the way it delivered care. The reengineering occurred across multiple dimensions, including the system’s leadership, management processes, care coordination, quality improvement, incentives, resource allocation, and information technology and electronic health record capacity. The guiding principle throughout all of these improvement initiatives was to reliably provide high-quality, high-value, patient-centered care to all veterans served by the system (Kizer, 2011; Kizer and Dudley, 2009).

One of the key tools for the improvement efforts was the implementation of a system-wide electronic health record system called VistA (Veterans Health Information Systems and Technology Architecture). The transition built on prior IT infrastructure investments in order to provide digital patient records for all patients, with information flowing among all providers and facilities. The expanded digital infrastructure provided new clinical capabilities to ensure reliability, such as clinical alerts; new methods for sharing best practices and implementing new knowledge, such as clinical decision support; and new data sources for generating knowledge, such as clinical and administrative data repositories (Brown et al., 2003).

As a result of these efforts, the VHA improved its performance on multiple care quality measures, increased the use of evidence-recommended care, and increased the efficiency of its operations. Studies have found that the VA performs as well as, and often better than, other systems on measures ranging from prevention to management of chronic diseases to treating acute conditions (Asch et al., 2004; Jha et al., 2003; Perlin et al., 2004; Singh et al., 2010; Trivedi et al., 2011).

Case Study: Managing Patient Flow

Another set of initiatives used a technique from the operations research field to ensure that resources are available when patients need them in a hospital setting. It accomplishes this by examining how patients enter and move throughout the hospital, from their initial admission, through the different units of the hospital, until their discharge. Importantly, this must include scheduled cases (such as elective surgeries) and unscheduled cases (such as emergency admissions), as both contribute to variability in the hospital census. The patient data are analyzed using mathematical models, and the results of that analysis are used to adjust hospital processes, such as the daily operating room schedule, to lower variability in the flow of patients to different hospital units (Litvak and Bisognano, 2011).
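A toy calculation can show why the scheduled cases matter so much for variability. The sketch below is a deliberate simplification and not the mathematical models used in the cited work: all admission counts are hypothetical, and variability is summarized simply as the standard deviation of daily admissions across one work week.

```python
from statistics import pstdev

def daily_load(elective, unscheduled):
    """Total admissions per day from scheduled and unscheduled cases."""
    return [e + u for e, u in zip(elective, unscheduled)]

# Hypothetical Monday-to-Friday counts.
unscheduled = [6, 5, 7, 6, 5]      # emergency admissions, outside the hospital's control
clustered = [14, 12, 4, 3, 2]      # 35 elective cases bunched early in the week
smoothed = [7, 7, 7, 7, 7]         # the same 35 cases spread evenly

before = pstdev(daily_load(clustered, unscheduled))
after = pstdev(daily_load(smoothed, unscheduled))
print(f"Std. dev. of daily admissions: {before:.1f} before, {after:.1f} after smoothing")
```

In this example, the elective schedule contributes far more day-to-day variation than the emergency arrivals do, so rearranging the same number of scheduled cases removes most of the variability without turning any patient away.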

By adopting these techniques, one hospital, Cincinnati Children’s Hospital, was able to improve care quality while simultaneously increasing surgical volume by 7 percent annually for 2 years, all without adding staff or increasing the number of hospital beds (Litvak and Bisognano, 2011). Similar results have been seen at another institution, Mayo Clinic in Florida, which implemented this methodology and was able to increase surgical volumes by 4 percent while decreasing variability by 20 percent, reducing staff turnover by 40 percent, and reducing overtime staffing by 30 percent (Smith et al., 2013).

Case Study: Human Factors Engineering with Electronic Health Records

Human factors engineering can help to identify potential safety, quality, and reliability challenges for technologies or processes by focusing on how humans will interact with them. In one example of this approach, a human factors analysis was performed on the medication ordering, dispensing, and administration processes, including a computerized physician order entry (CPOE) system. The researchers for the study, at Centre Hospitalier Universitaire in Lille, France, analyzed the user interface to the CPOE system and identified multiple issues that limited the usability of the software. This was followed by a simulation of the typical nursing work environment, during which the participating nurses identified 28 usability issues (Beuscart-Zephir et al., 2010; Carayon et al., 2013; Pelayo et al.). By identifying and correcting potential usability issues, the user interface can better support care processes and promote the efficiency and reliability of care.

As a second example, current medical devices produce a significant quantity of information for clinicians to review, and produce false alarms at a high frequency. These two factors have created a complex work environment in which clinicians have too much information to review and are unable to judge which alarms are truly critical. The situation has led the Joint Commission and professional societies to encourage health care leaders to examine alarm fatigue and to reconfigure device design, where appropriate, to limit alarms to situations in which they are clinically necessary.

Despite Examples of Success, Systems Approaches Remain Limited

Although current initiatives have had real effects on patients’ lives, most of these are point-based or disease-focused, and, on the grand scale of the health system, they are isolated micro-victories. These examples of success have also depended on an extraordinary combination of circumstances, leadership, culture, and resources. Multiple opportunities are now available for a systems approach, ranging from accountable care organizations to the new information ecosystem. An outstanding question is how these systems approaches can be spread more broadly, involving multiple health care organizations and integrating the health and health care systems.

Technological, Cultural, and Structural Barriers Prevent Widespread Use of Systems Approaches

Although cases of successful systems approaches to health and health care exist, little progress has been made in spreading systems approaches, with multiple barriers preventing widespread implementation of successful tools. One major challenge is the current incentive structure for health care, the fee-for-service payment model. This payment model pays for each specific health care service but does not pay for simple tasks like communicating with patients or coordinating care. As such, it discourages any improvement initiative that reduces the number of health care services performed, because it would also reduce revenue for the organization. Even more perversely, a hospital may see its margins decrease as a result of initiatives that reduce preventable harms (Hsu et al., 2013). This means that initiatives that improve patient outcomes at a lower cost can be unsustainable, and eventually threaten an organization’s long-term survival. Yet, changing the reimbursement system is only a first step, as sustainable improvement will require coupling reimbursement changes with a systems approach to redesigning care processes.

Another major challenge is the current culture of health care, which focuses on individual clinicians rather than systems and in which blame is the standard reaction to any error. As a result, this culture relies on the heroism of clinicians to prevent harm. The difficulty with this type of culture is that humans, no matter how experienced, skilled, or vigilant, will always make mistakes. This is magnified in chaotic health care environments that place substantial physical and mental stresses on clinicians, who are attempting to simultaneously manage the care needs of multiple patients. Moving away from blame allows an organization to learn from mistakes and conduct systematic improvement efforts based on that knowledge. Moreover, this cultural shift will allow systems to be built that recognize and account for inevitable human error, and provide redundancies and cross-checks that maintain safety regardless.

Furthermore, a supportive organizational culture is a prerequisite for the successful implementation and sustainability of improvement initiatives (Garvin et al., 2008; Klein and Sorra, 1996). Some organizational cultures, particularly those that are overly hierarchical or have punitive responses toward any failure, may not support transparency, standardization, or other factors that a systems approach demands. As with any change to the provision of care, a strong culture of collaboration and communication in which teamwork, creativity, and innovation are valued is foundational to success. For example, one study found that a culture in which staff felt empowered to report safety concerns was critical to reducing catheter-related bloodstream infections in critical care environments (Pronovost et al., 2006a; Pronovost et al., 2006b; Vigorito et al., 2011). In a similar vein, one study found that organizations that promoted staff buy-in to systems tools by training their clinical and nonclinical staff in process improvement had the strongest improvements, as this technique gave everyone in the organization the ability to apply systems-based problem solving and promoted more extensive use of these tools (Edwards et al., 2011; Lukas et al., 2007).

Several studies have demonstrated the significant impact that an organization’s culture can have on its performance. For example, after implementing an initiative focused on teamwork, coaching, and communication skills in selected facilities, a large, multi-facility health system found that its mortality rates decreased by 18 percent and its adverse events continued to decrease, while non-participating facilities only saw a 7 percent mortality reduction (IOM, 2012; Neily et al., 2011; Neily et al., 2010). Another study found that the top hospitals for heart attack outcomes were characterized by a commitment to learning and improvement, had senior management involvement in their initiatives, and had non-punitive approaches to problem-solving (Curry et al., 2011).

In a complementary fashion, supportive leadership is required for successful implementation and sustainable solutions. By defining and emphasizing that a systems approach is an organizational goal, leadership at all levels can encourage all parts of the organization to implement this approach as a routine part of care. Leaders have multiple tools at their disposal to promote systems concepts, including the ability to raise their visibility, prioritize their use, align expectations and provide a shared vision, and ensure that the necessary resources—in terms of time, staff, and finances—are provided. Studies have demonstrated the impact that executive leadership can have—one study found that hospitals whose leader was strongly involved in improvement initiatives often provided higher-quality care (Vaughn et al., 2006).

An additional challenge to the routine use of systems approaches is the cultural difference between the engineering and health disciplines. Many clinicians and public health officials may not be aware of the potential benefits of system-based improvements, especially in clinical areas, and the cultural divide between the disciplines may not be one that clinicians consciously recognize. Furthermore, the two fields use different terminologies and view problems with different conceptual frameworks, making communications between the fields difficult. Finally, the incentive structures for the fields differ. Engineering faculty do not have academic incentives to pursue health care challenges, as their academic incentives are focused on solving new, thorny problems—as opposed to applying existing solutions to health care problems.

Further collaborations among clinicians, industry, and engineers are important to ensure that engineering lessons are applied to medical technologies, including human factors engineering principles that can improve the usability of a device. One example initiative that advances this goal was announced earlier this year by nine different device makers, who pledged to develop devices that were interoperable and able to share patient health information to reduce harm (Patient Safety, 2013).

Greater expertise in systems methods also influences the technical success of a systems approach. System tools can rarely be applied in a cookbook fashion, but generally need to be understood in order to be customized to local conditions and needs. Ensuring that the necessary expertise is available for such customization can be done in several ways, from increasing the number of engineers involved in routine care redesign to embedding systems techniques in health professional education. Further improvements to clinical education and continuing education can improve the application of systems tools, such as teaching methods for applying evidence to clinical decision making, how to deliver care in an interdisciplinary team environment, and how to continue learning new methods for providing care (AAMC, 2011; Lucian Leape Institute Roundtable On Reforming Medical Education, 2010).

One additional foundational element is an expanded digital infrastructure that can routinely capture health and health care data, share such data with those who need it, and provide feedback based on current research. By leveraging the capabilities of a digital infrastructure, systems of care can be redesigned to improve their operational processes and better meet patient health needs; initiatives can be evaluated for their effectiveness and efficiency at improving health; and new research and evidence can be quickly communicated to clinicians and public health officials. Furthermore, the new digital infrastructure provides new sources of information about the effectiveness of new clinical treatments, interventions, and process improvements, and can supplement the knowledge gained from the existing clinical research enterprise.

What Are the Critical Factors for Successfully Applying a Systems Perspective to Health?

There are multiple prerequisites for implementing a systems approach, including

- Reimbursement systems that reward value and outcomes

- Supportive culture and organizational leadership

- Expanded digital infrastructure that captures essential data elements

- Collaborations among clinicians, public health officials, engineers, and industry

- Embedding engineering expertise in care delivery and clinical education

Success Depends on Centering Initiatives on Patients and the Public

For the success of systems-based initiatives, the importance of one factor deserves special emphasis: centering the initiatives on patients and the public. This is important because the goal of any improvement initiative should be centered on the individual served—whether it is a person in the community, a patient, or a consumer—and on improving his or her health and care experience. Moreover, individuals play critical roles in managing their health outside of clinical encounters, from managing complex treatment regimens to everyday decisions on nutrition and exercise. Finally, individual patients and the public can be vital partners in implementing systems tools and techniques by highlighting how these tools work on the ground and providing feedback on whether these tools improve their care experience or aid their health maintenance.

The imperative for systems approaches to center their efforts on patients follows a similar obligation for the broader health system. This obligation has been known for many years; the criticality of patient-centered care was highlighted more than a decade ago in the IOM report Crossing the Quality Chasm (IOM, 2001). Yet, this type of care is still not routinely delivered, and the typical culture of care does not support or encourage patients to engage in their health and health care (Berwick, 2009). Patients find that clear communication is often lacking, with fewer than half of patients receiving understandable information on the benefits and tradeoffs of their treatment options (Fagerlin et al., 2010; Sepucha et al., 2010). Furthermore, patients are often not engaged in their medical care to the extent they prefer, with almost half of patients reporting that they are not satisfied with their current level of participation in medical decisions (Blue Shield of California Foundation, 2012; Degner et al., 1997; Singh et al., 2010). Improving patient engagement depends on addressing the multiple system factors that move the focus of care away from patients, such as the current incentive structure, health care culture, and clinical environment.

The recent IOM report Best Care at Lower Cost noted that a “learning health care system is anchored on patient needs and perspectives and promotes the inclusion of patients, families, and other caregivers as vital members of the continuously learning care team” (IOM, 2012). It further found that improved patient engagement was linked to better patient experiences, health outcomes, quality of life, and reduced costs, yet patient and family involvement in care was limited. Patient engagement is a relatively broad concept, and presents a significant challenge for the culture of care. As articulated in Best Care at Lower Cost, patient engagement requires a true partnership between clinicians and patients, with clinicians providing scientific expertise on treatment options while patients provide their knowledge on the suitability of care options based on their needs, goals, and circumstances (IOM, 2012).

Beyond the obligation to focus initiatives on patients, patients and the public are important actors in any improvement process. Several institutions have successfully included patients in safety initiatives, and these cases have reported reduced medical errors or increased hand hygiene with patient involvement (Davis et al., 2007; Longtin et al., 2010; Weingart et al., 2005; Weingart et al., 2011). Another institution reported positive results from including patients in systematic improvement activities, such as value-stream mapping and production-system methods, in order to ensure that value was measured from the patient perspective (Toussaint, 2009). Yet another institution involved families in the analysis and redesign of family-centered rounds in a pediatric hospital (Carayon et al., 2011; Kelly et al., 2013; Xie et al., 2012). The Dana-Farber Cancer Institute has seen positive results from involving patients and families throughout its organizational governance structures, from involving patients in continuous improvement teams to asking for input into institutional policies through patient and family advisory councils (Ponte et al., 2003). These cases highlight the potential for greater partnering with patients and the broader public in implementing systems approaches across the health system.

Spreading Systems Approaches Depends on Technology, Leadership, Culture, and Greater Learning

The various examples in which systems tools have been applied successfully to health and health care underscore the potential of this approach. Yet, multiple barriers now prevent their routine use. To address the barriers, multiple strategies are needed, and we include several strategies below to spark discussion among policymakers in health and health care arenas.

Increase the generation and dissemination of systems knowledge. Sharing best practices across organizations and learning from successful cases can increase the potential success of systems approaches.

- Develop materials that summarize how other fields have successfully applied systems concepts and what they have learned.

- Establish multidisciplinary learning labs with close collaboration between health care and engineering to promote communication between the disciplines.

- Develop a research agenda to stimulate innovation in systems approaches and understand the factors limiting the use of these approaches.

Provide the necessary technological supports for systems approaches. Although technologies alone cannot reform broken processes, systems tools cannot work without an interoperable, integrated technological infrastructure.

- Promote the growth of digital records that capture the necessary data for process redesign, routine care and health maintenance, and evaluating success.

- Improve the interoperability, usability, usefulness, and integration of different technologies by adopting standards for interoperability of medical devices and human factors methods.

Support system-based initiatives with appropriately structured financial incentives. Financial incentives currently discourage improvement efforts and can make those initiatives unsustainable.

- Promote payment methods that reward improvement and better health outcomes.

Expand expertise in systems methods throughout the health system. Increasing technical knowledge about systems approaches can allow for greater application of these methods, improve communications between health and engineering professionals, and promote greater customization of these tools to local conditions.

- Integrate systems concepts into the education of health professionals, including the standard curriculum for medicine, nursing, and other clinical providers, with a focus on how they may be applied to improve care (IOM and NAE, 2005; Spear, 2006).

- Expand educational opportunities for engineering professionals to apply their field to health and health care delivery in order to enhance their ability to integrate into health care organizations (Carayon, 2010; Xiao and Fairbanks, 2011).

Prioritize the key opportunities for progress. Although there are numerous areas where systems methods could be used to improve health and health care, progress will be accelerated by developing priorities for greater attention.

- Identify the health conditions and health care processes that would be most amenable to prevention and management using a systems approach. Given the potential for systems approaches across the health care landscape, the areas for their application are wide-ranging, including primary care; chronic care management, such as type 2 diabetes care; emergency medicine; obstetrics; and mental health.

- Outline how a systems approach could address problems in the patient and family care experience, such as the loss of dignity and respect.

Each of these strategies can individually increase the use of systems tools, but greater progress will depend on a comprehensive approach that addresses the many underlying challenges preventing their use.

Conclusion

It is clear that urgent change is needed to improve the health system, given its safety, quality, cost, and complexity challenges. One method for addressing these challenges is through a systems approach to improvement. A systems approach has improved quality and value in other industries, and it could be similarly transformative for health and health care. Indeed, a limited number of health care organizations have seen substantial improvements from its application. In order to be applied to health, a systems approach would need to incorporate all of the elements influencing health, including the interfaces among these different elements. Because a systems approach is comprehensive by nature, multiple challenges prevent its widespread use, such as technological, cultural, and structural barriers. Furthermore, progress in spreading systems tools depends on centering these initiatives on patients and the public, as well as engaging patients as vital partners in their use. Addressing the barriers preventing the routine use of systems approaches will require a comprehensive set of strategies, including improving the interoperability of technologies, expanding expertise, and disseminating best practices more widely. Once these barriers are addressed, systems approaches can become routine for improving the health of all Americans and promoting better health at lower cost.

References

- AAMC. 2011. Behavioral and social science foundations for future physicians. Washington, D.C. Available at: https://www.aamc.org/system/files/d/1/271020-behavioralandsocialsciencefoundationsforfuturephysicians.pdf (accessed May 19, 2020).

- Agwunobi, J., and P. A. London. 2009. Removing costs from the health care supply chain: Lessons from mass retail. Health Affairs (Millwood) 28(5):1336-1342. https://doi.org/10.1377/hlthaff.28.5.1336

- Asch, S. M., E. A. McGlynn, M. M. Hogan, R. A. Hayward, P. Shekelle, L. Rubenstein, J. Keesey, J. Adams, and E. A. Kerr. 2004. Comparison of quality of care for patients in the veterans health administration and patients in a national sample. Annals of Internal Medicine 141(12):938-945. https://doi.org/10.7326/0003-4819-141-12-200412210-00010

- Baker, D. P., R. Day, and E. Salas. 2006. Teamwork as an essential component of high-reliability organizations. Health Services Research 41(4 Pt 2):1576-1598. https://doi.org/10.1111/j.1475-6773.2006.00566.x

- Berwick, D. M. 2009. What ‘patient-centered’ should mean: Confessions of an extremist. Health Affairs (Millwood) 28(4):w555-565. https://doi.org/10.1377/hlthaff.28.4.w555

- Beuscart-Zephir, M. C., S. Pelayo, and S. Bernonville. 2010. Example of a human factors engineering approach to a medication administration work system: Potential impact on patient safety. International Journal of Medical Informatics 79(4):e43-57. https://doi.org/10.1016/j.ijmedinf.2009.07.002

- Blackmore, C. C., R. S. Mecklenburg, and G. S. Kaplan. 2011. At Virginia Mason, collaboration among providers, employers, and health plans to transform care cut costs and improved quality. Health Affairs (Millwood) 30(9):1680-1687. https://doi.org/10.1377/hlthaff.2011.0291

- Blue Shield of California Foundation. 2012. Empowerment and engagement among low-income Californians: Enhancing patient-centered care. http://www.blueshieldcafoundation.org/sites/default/files/publications/downloadable/empowerment%20and%20engagement_final.pdf (accessed April 4, 2013).

- Bohmer, R. 2010. Virginia Mason Medical Center (abridged). Cambridge, MA: Harvard Business School.

- Brown, S. H., M. J. Lincoln, P. J. Groen, and R. M. Kolodner. 2003. VistA–U.S. Department of Veterans Affairs national-scale HIS. International Journal of Medical Informatics 69(2-3):135-156. https://doi.org/10.1016/s1386-5056(02)00131-4

- Bureau of Transportation Statistics. 2011. National transportation statistics. Washington, D.C.: Research and Innovation Technology Administration, U.S. Department of Transportation.

- Carayon, P. 2010. Human factors in patient safety as an innovation. Applied Ergonomics 41(5):657-665. https://doi.org/10.1016/j.apergo.2009.12.011

- Carayon, P., L. L. DuBenske, B. C. McCabe, B. Shaw, M. E. Gaines, M. M. Kelly, J. Orne, and E. D. Cox. 2011. Work system barriers and facilitators to family engagement in rounds in a pediatric hospital. In Healthcare systems ergonomics and patient safety 2011, edited by S. Albolino, S. Bagnara, T. Bellandi, J. Llaneza, G. Rosal and R. Tartaglia. Boca Raton, FL: CRC Press. Pp. 81-85.

- Carayon, P., A. S. Hundt, B. T. Karsh, A. P. Gurses, C. J. Alvarado, M. Smith, and P. F. Brennan. 2006. Work system design for patient safety: The SEIPS model. Quality & Safety in Health Care 15(Supplement I):i50-i58. https://doi.org/10.1136/qshc.2005.015842

- Carayon, P., A. Xie, and K. S. 2013. Human factors and ergonomics. In Making health care safer II: An updated critical analysis of the evidence for patient safety practices. Comparative effectiveness review no. 211. AHRQ Publication No. 13-E001-EF, edited by P. G. Shekelle, R. Wachter, P. Pronovost, K. Schoelles, K. McDonald, S. Dy, K. Shojania, J. Reston, Z. Berger, B. Johnsen, J. Larkin, S. Lucas, K. Martinez, A. Motala, S. Newberry, M. Noble, E. Pfoh, S. Ranji, S. Rennke, E. Schmidt, R. Shanman, N. Sullivan, F. Sun, K. Tipton, J. Treadwell, A. Tsou, M. Vaiana, S. Weaver, R. Wilson and B. Winters. Rockville, MD: Agency for Healthcare Research and Quality. Pp. 325-350.

- Chassin, M. R., and J. M. Loeb. 2011. The ongoing quality improvement journey: Next stop, high reliability. Health Affairs (Millwood) 30(4):559-568. https://doi.org/10.1377/hlthaff.2011.0076

- Classen, D. C., R. Resar, F. Griffin, F. Federico, T. Frankel, N. Kimmel, J. C. Whittington, A. Frankel, A. Seger, and B. C. James. 2011. ‘Global trigger tool’ shows that adverse events in hospitals may be ten times greater than previously measured. Health Affairs (Millwood) 30(4):581-589. https://doi.org/10.1377/hlthaff.2011.0190

- Curry, L. A., E. Spatz, E. Cherlin, J. W. Thompson, D. Berg, H. H. Ting, C. Decker, H. M. Krumholz, and E. H. Bradley. 2011. What distinguishes top-performing hospitals in acute myocardial infarction mortality rates? A qualitative study. Annals of Internal Medicine 154(6):384-390. https://doi.org/10.7326/0003-4819-154-6-201103150-00003

- Davis, R. E., R. Jacklin, N. Sevdalis, and C. A. Vincent. 2007. Patient involvement in patient safety: What factors influence patient participation and engagement? Health expectations: an international journal of public participation in health care and health policy 10(3):259-267. https://doi.org/10.1111/j.1369-7625.2007.00450.x

- Degner, L. F., L. J. Kristjanson, D. Bowman, J. A. Sloan, K. C. Carriere, J. O’Neil, B. Bilodeau, P. Watson, and B. Mueller. 1997. Information needs and decisional preferences in women with breast cancer. JAMA 277(18):1485-1492.

- Donchin, Y., D. Gopher, M. Olin, Y. Badihi, M. Biesky, C. L. Sprung, R. Pizov, and S. Cotev. 1995. A look into the nature and causes of human errors in the intensive care unit. Critical Care Medicine 23(2):294-300. https://doi.org/10.1097/00003246-199502000-00015

- Donchin, Y., and F. J. Seagull. 2002. The hostile environment of the intensive care unit. Current Opinion in Critical Care 8(4):316-320. https://doi.org/10.1097/00075198-200208000-00008

- Edwards, J. N., S. Silow-Carroll, and A. Lashbrook. 2011. Achieving efficiency: Lessons from four top-performing hospitals. New York, NY: Commonwealth Fund.

- Fagerlin, A., K. R. Sepucha, M. P. Couper, C. A. Levin, E. Singer, and B. J. Zikmund-Fisher. 2010. Patients’ knowledge about 9 common health conditions: The DECISIONS survey. Medical Decision Making 30(5 Suppl):35S-52S. https://doi.org/10.1177/0272989X10378700

- Farrell, D., E. Jensen, B. Kocher, N. Lovegrove, F. Melhem, L. Mendonca, and B. Parish. 2008. Accounting for the cost of US health care: A new look at why Americans spend more. Washington, DC: McKinsey Global Institute.

- Fuhrmans, V. 2007. A novel plan helps hospital wean itself off pricey tests. Wall Street Journal, January 12. Available at: https://www.wsj.com/articles/SB116857143155174786 (accessed May 19, 2020).

- Garvin, D. A., A. C. Edmondson, and F. Gino. 2008. Is yours a learning organization? Harvard Business Review 86(3):109. Available at: https://hbr.org/2008/03/is-yours-a-learning-organization (accessed May 19, 2020).

- Good Stewardship Working Group. 2011. The “top 5” lists in primary care: Meeting the responsibility of professionalism. Archives of Internal Medicine 171(15):1385-1390. https://doi.org/10.1001/archinternmed.2011.231

- Hammer, M. 2004. Deep change. How operational innovation can transform your company. Harvard Business Review 82(4):84-93, 141. Available at: https://hbr.org/2004/04/deep-change-how-operational-innovation-can-transform-your-company (accessed May 19, 2020).

- Hillestad, R., J. Bigelow, A. Bower, F. Girosi, R. Meili, R. Scoville, and R. Taylor. 2005. Can electronic medical record systems transform health care? Potential health benefits, savings, and costs. Health Affairs (Millwood) 24(5):1103-1117. https://doi.org/10.1377/hlthaff.24.5.1103

- Hoffman, A., and E. J. Emanuel. 2013. Reengineering US health care. JAMA 309(7):661-662. https://doi.org/10.1001/jama.2012.214571

- Hsu, E., D. Lin, S. J. Evans, K. S. Hamid, K. D. Frick, T. Yang, P. J. Pronovost, and J. C. Pham. 2013. Doing well by doing good: Assessing the cost savings of an intervention to reduce central line-associated bloodstream infections in a Hawaii hospital. American Journal of Medical Quality 29(1):13-19. https://doi.org/10.1177/1062860613486173

- Institute of Medicine. 2001. Crossing the Quality Chasm: A New Health System for the 21st Century. Washington, DC: The National Academies Press. https://doi.org/10.17226/10027.

- Institute of Medicine. 2010. The Healthcare Imperative: Lowering Costs and Improving Outcomes: Workshop Series Summary. Washington, DC: The National Academies Press. https://doi.org/10.17226/12750.

- Institute of Medicine. 2011. Digital Infrastructure for the Learning Health System: The Foundation for Continuous Improvement in Health and Health Care: Workshop Series Summary. Washington, DC: The National Academies Press. https://doi.org/10.17226/12912.

- Institute of Medicine. 2013. Best Care at Lower Cost: The Path to Continuously Learning Health Care in America. Washington, DC: The National Academies Press. https://doi.org/10.17226/13444.

- Institute of Medicine and National Academy of Engineering. 2011. Engineering a Learning Healthcare System: A Look at the Future: Workshop Summary. Washington, DC: The National Academies Press. https://doi.org/10.17226/12213.

- National Academy of Engineering and Institute of Medicine. 2009. Systems Engineering to Improve Traumatic Brain Injury Care in the Military Health System: Workshop Summary. Washington, DC: The National Academies Press. https://doi.org/10.17226/12504.

- National Academy of Engineering and Institute of Medicine. 2005. Building a Better Delivery System: A New Engineering/Health Care Partnership. Washington, DC: The National Academies Press. https://doi.org/10.17226/11378.

- Jha, A. K., J. B. Perlin, K. W. Kizer, and R. A. Dudley. 2003. Effect of the transformation of the Veterans Affairs health care system on the quality of care. New England Journal of Medicine 348(22):2218-2227. https://doi.org/10.1056/NEJMsa021899

- Kaplan, G. S., and S. H. Patterson. 2008. Seeking perfection in healthcare. A case study in adopting Toyota Production System methods. Healthcare Executive 23(3):16-18, 20-21.

- Kellermann, A. L., and S. S. Jones. 2013. What it will take to achieve the as-yet-unfulfilled promises of health information technology. Health Affairs (Millwood) 32(1):63-68. https://doi.org/10.1377/hlthaff.2012.0693

- Kelly, M. M., A. Xie, P. Carayon, L. L. DuBenske, M. L. Ehlenbach, and E. D. Cox. 2013. Strategies for improving family engagement during family-centered rounds. Journal of Hospital Medicine 8(4):201-207. https://doi.org/10.1002/jhm.2022

- Kenney, C. 2008. The best practice: How the new quality movement is transforming medicine. 1st ed. New York: Public Affairs.

- ———. 2011. Transforming health care: Virginia Mason Medical Center’s pursuit of the perfect patient experience. Boca Raton: CRC Press.

- Kizer, K. W. 2011. Veterans health affairs: Transforming the Veterans Health Administration. In Engineering a learning healthcare system, edited by C. Grossmann, W. A. Goolsby, L. Olsen and J. M. McGinnis. Washington, DC: National Academies Press.

- Kizer, K. W., and R. A. Dudley. 2009. Extreme makeover: Transformation of the Veterans Health Care System. Annual Review of Public Health 30:313-339. https://doi.org/10.1146/annurev.publhealth.29.020907.090940

- Klein, K. J., and J. S. Sorra. 1996. The challenge of innovation implementation. Academy of Management Review 21(4):1055-1080. https://doi.org/10.2307/259164

- Kocher, R., and N. R. Sahni. 2011a. Rethinking health care labor. New England Journal of Medicine 365(15):1370-1372. https://doi.org/10.1056/NEJMp1109649

- Landrigan, C. P., G. J. Parry, C. B. Bones, A. D. Hackbarth, D. A. Goldmann, and P. J. Sharek. 2010. Temporal trends in rates of patient harm resulting from medical care. New England Journal of Medicine 363(22):2124-2134. https://doi.org/10.1056/NEJMsa1004404

- Levinson, D. R. 2010. Adverse events in hospitals: National incidence among Medicare beneficiaries. Washington, DC: U.S. Department of Health and Human Services, Office of Inspector General.

- ———. 2012. Hospital incident reporting systems do not capture most patient harm. Washington, DC: U.S. Department of Health and Human Services, Office of Inspector General.

- Litvak, E., and M. Bisognano. 2011. More patients, less payment: Increasing hospital efficiency in the aftermath of health reform. Health Affairs (Millwood) 30(1):76-80. https://doi.org/10.1377/hlthaff.2010.1114

- Longtin, Y., H. Sax, L. L. Leape, S. E. Sheridan, L. Donaldson, and D. Pittet. 2010. Patient participation: Current knowledge and applicability to patient safety. Mayo Clinic Proceedings 85(1):53-62. https://doi.org/10.4065/mcp.2009.0248

- Lucian Leape Institute Roundtable on Reforming Medical Education. 2010. Unmet needs: Teaching physicians to provide safe patient care. Boston, MA: National Patient Safety Foundation.

- Lukas, C. V., S. K. Holmes, A. B. Cohen, J. Restuccia, I. E. Cramer, M. Shwartz, and M. P. Charns. 2007. Transformational change in health care systems: An organizational model. Health Care Management Review 32(4):309-320. https://doi.org/10.1097/01.HMR.0000296785.29718.5d

- Martin, A. B., D. Lassman, B. Washington, A. Catlin, and the National Health Expenditure Accounts Team. 2012. Growth in US health spending remained slow in 2010; Health share of gross domestic product was unchanged from 2009. Health Affairs (Millwood) 31(1):208-219. https://doi.org/10.1377/hlthaff.2011.1135

- McConnell, K., R. C. Lindrooth, D. R. Wholey, T. M. Maddox, and N. Bloom. 2013. Management practices and the quality of care in cardiac units. JAMA Internal Medicine:1-9. https://doi.org/10.1001/jamainternmed.2013.3577

- McGlynn, E. A., S. M. Asch, J. Adams, J. Keesey, J. Hicks, A. DeCristofaro, and E. A. Kerr. 2003. The quality of health care delivered to adults in the United States. New England Journal of Medicine 348(26):2635-2645. https://doi.org/10.1056/NEJMsa022615

- Nance, J. J. 2011. Airline safety. In Engineering a learning healthcare system: A look at the future (workshop summary), edited by C. Grossmann, W. A. Goolsby, L. Olsen and J. M. McGinnis. Washington, DC: National Academies Press.

- Neily, J., P. D. Mills, N. Eldridge, B. T. Carney, D. Pfeffer, J. R. Turner, Y. Young-Xu, W. Gunnar, and J. P. Bagian. 2011. Incorrect surgical procedures within and outside of the operating room: A follow-up report. Archives of Surgery 146(11):1235-1239. https://doi.org/10.1001/archsurg.2011.171

- Neily, J., P. D. Mills, Y. Young-Xu, B. T. Carney, P. West, D. H. Berger, L. M. Mazzia, D. E. Paull, and J. P. Bagian. 2010. Association between implementation of a medical team training program and surgical mortality. JAMA 304(15):1693-1700. https://doi.org/10.1001/jama.2010.1506

- Patient Safety, Science, and Technology Summit. 2013. 2013 summit overview. http://www.patientsafetysummit.org/2013/ (accessed June 3, 2013).

- Pelayo, S., F. Anceaux, J. Rogalski, P. Elkin, and M.-C. Beuscart-Zephir. A comparison of the impact of CPOE implementation and organizational determinants on doctor-nurse communications and cooperation. International Journal of Medical Informatics [Epub]. https://doi.org/10.1016/j.ijmedinf.2012.09.001

- Perlin, J. B., R. M. Kolodner, and R. H. Roswell. 2004. The Veterans Health Administration: Quality, value, accountability, and information as transforming strategies for patient-centered care. American Journal of Managed Care 10(11 Pt 2):828-836. Available at: https://pubmed.ncbi.nlm.nih.gov/15609736/ (accessed May 19, 2020).

- Pham, J. C., K. D. Frick, and P. J. Pronovost. 2013. Why don’t we know whether care is safe? American Journal of Medical Quality. Available at: https://psnet.ahrq.gov/issue/why-dont-we-know-whether-care-safe (accessed May 19, 2020).

- Ponte, P. R., G. Conlin, J. B. Conway, S. Grant, C. Medeiros, J. Nies, L. Shulman, P. Branowicki, and K. Conley. 2003. Making patient-centered care come alive: Achieving full integration of the patient’s perspective. Journal of Nursing Administration 33(2):82-90. https://doi.org/10.1097/00005110-200302000-00004

- Pronovost, P., D. Needham, S. Berenholtz, D. Sinopoli, H. Chu, S. Cosgrove, B. Sexton, R. Hyzy, R. Welsh, G. Roth, J. Bander, J. Kepros, and C. Goeschel. 2006a. An intervention to decrease catheter-related bloodstream infections in the ICU. New England Journal of Medicine 355(26):2725-2732. https://doi.org/10.1056/NEJMoa061115

- Pronovost, P., B. Weast, B. Rosenstein, J. B. Sexton, C. G. Holzmueller, L. Paine, R. Davis, and H. R. Rubin. 2005. Implementing and validating a comprehensive unit-based safety program. Journal of Patient Safety 1(1):33-40. Available at: https://psnet.ahrq.gov/issue/implementing-and-validating-comprehensive-unit-based-safety-program (accessed May 19, 2020).

- Pronovost, P. J., S. M. Berenholtz, C. A. Goeschel, D. M. Needham, J. B. Sexton, D. A. Thompson, L. H. Lubomski, J. A. Marsteller, M. A. Makary, and E. Hunt. 2006b. Creating high reliability in health care organizations. Health Services Research 41(4 Pt 2):1599-1617. https://doi.org/10.1111/j.1475-6773.2006.00567.x

- Pronovost, P. J., C. A. Goeschel, K. L. Olsen, J. C. Pham, M. R. Miller, S. M. Berenholtz, J. B. Sexton, J. A. Marsteller, L. L. Morlock, A. W. Wu, J. M. Loeb, and C. M. Clancy. 2009. Reducing health care hazards: Lessons from the commercial aviation safety team. Health Affairs (Millwood) 28(3):w479-489. https://doi.org/10.1377/hlthaff.28.3.w479

- Roberts, K. H., and D. M. Rousseau. 1989. Research in nearly failure-free, high-reliability organizations – having the bubble. IEEE Transactions on Engineering Management 36(2):132-139. Available at: https://www.theisrm.org/documents/Roberst%20&%20Rouseau%201989%20-%20Research%20in%20Nearly%20Failure%20Free%20High%20Reliability%20Organizations%20-%20Having%20the%20Bubble.pdf (accessed May 19, 2020).

- Rochlin, G. I., T. R. La Porte, and K. H. Roberts. 1987. The self-designing high-reliability organization: Aircraft carrier flight operations at sea. Naval War College Review (Autumn):76-90. Available at: https://digital-commons.usnwc.edu/cgi/viewcontent.cgi?referer=https://www.google.com/&httpsredir=1&article=4373&context=nwc-review (accessed May 19, 2020).

- Sage, A. P., and C. D. Cuppan. 2001. On the systems engineering and management of systems of systems and federations of systems. Information, Knowledge, Systems Management 2(4):325-345. Available at: https://content.iospress.com/articles/information-knowledge-systems-management/iks00045 (accessed May 19, 2020).

- Schoen, C., K. Davis, S. K. How, and S. C. Schoenbaum. 2006. U.S. health system performance: A national scorecard. Health Affairs (Millwood) 25(6):w457-475. https://doi.org/10.1377/hlthaff.25.w457

- Semel, M. E., A. M. Bader, A. Marston, S. R. Lipsitz, R. E. Marshall, and A. A. Gawande. 2010. Measuring the range of services clinicians are responsible for in ambulatory practice. Journal of Evaluation in Clinical Practice. https://doi.org/10.1111/j.1365-2753.2010.01598.x

- Sepucha, K. R., A. Fagerlin, M. P. Couper, C. A. Levin, E. Singer, and B. J. Zikmund-Fisher. 2010. How does feeling informed relate to being informed? The DECISIONS survey. Medical Decision Making 30(5 Suppl):77S-84S. https://doi.org/10.1177/0272989X10379647

- Singh, J. A., J. A. Sloan, P. J. Atherton, T. Smith, T. F. Hack, M. M. Huschka, T. A. Rummans, M. M. Clark, B. Diekmann, and L. F. Degner. 2010. Preferred roles in treatment decision making among patients with cancer: A pooled analysis of studies using the control preferences scale. American Journal of Managed Care 16(9):688-696. Available at: https://www.ajmc.com/journals/issue/2010/2010-09-vol16-n09/ajmc_10sep_singh_688to696 (accessed May 19, 2020).

- Smith, C. D., T. Spackman, K. Brommer, M. W. Stewart, M. Vizzini, J. Frye, and W. C. Rupp. 2013. Re-engineering the operating room using variability methodology to improve health care value. Journal of the American College of Surgeons 216(4):559-568. https://doi.org/10.1016/j.jamcollsurg.2012.12.046

- Spear, S. J. 2006. Fixing healthcare from the inside: Teaching residents to heal broken delivery processes as they heal sick patients. Academic Medicine 81(10 Suppl):S144-149. https://doi.org/10.1097/00001888-200610001-00034

- Spear, S. J., and H. K. Bowen. 1999. Decoding the DNA of the Toyota Production System. Harvard Business Review. Available at: https://hbr.org/1999/09/decoding-the-dna-of-the-toyota-production-system (accessed May 19, 2020).

- Timmel, J., P. S. Kent, C. G. Holzmueller, L. Paine, R. D. Schulick, and P. J. Pronovost. 2010. Impact of the comprehensive unit-based safety program (CUSP) on safety culture in a surgical inpatient unit. Joint Commission Journal on Quality and Patient Safety 36(6):252-260. https://doi.org/10.1016/s1553-7250(10)36040-5

- Toussaint, J. 2009. Writing the new playbook for U.S. health care: Lessons from Wisconsin. Health Affairs (Millwood) 28(5):1343-1350. https://doi.org/10.1377/hlthaff.28.5.1343

- Trivedi, A. N., S. Matula, I. Miake-Lye, P. A. Glassman, P. Shekelle, and S. Asch. 2011. Systematic review: Comparison of the quality of medical care in Veterans Affairs and non-Veterans Affairs settings. Medical Care 49(1):76-88. https://doi.org/10.1097/MLR.0b013e3181f53575

- U.S. Navy. 2013. Carrier. Available at: http://www.navy.mil/navydata/ships/carriers/powerhouse/powerhouse.asp (accessed June 4, 2013).

- Vaughn, T., M. Koepke, E. Kroch, W. Lehrman, S. Sinha, and S. Levey. 2006. Engagement of leadership in quality improvement initiatives: Executive quality improvement survey results. Journal of Patient Safety 2(1). Available at: http://www.ihi.org/resources/Pages/Publications/EngagementofLeadershipinQISurveyResults.aspx (accessed May 19, 2020).

- Vigorito, M. C., L. McNicoll, L. Adams, and B. Sexton. 2011. Improving safety culture results in Rhode Island ICUs: Lessons learned from the development of action-oriented plans. Joint Commission Journal on Quality and Patient Safety 37(11):509-514. https://doi.org/10.1016/s1553-7250(11)37065-1

- Walker, J., and P. Carayon. 2009. From tasks to processes: The case for changing health information technology to improve health care. Health Affairs (Millwood) 28(2):467-477. https://doi.org/10.1377/hlthaff.28.2.467